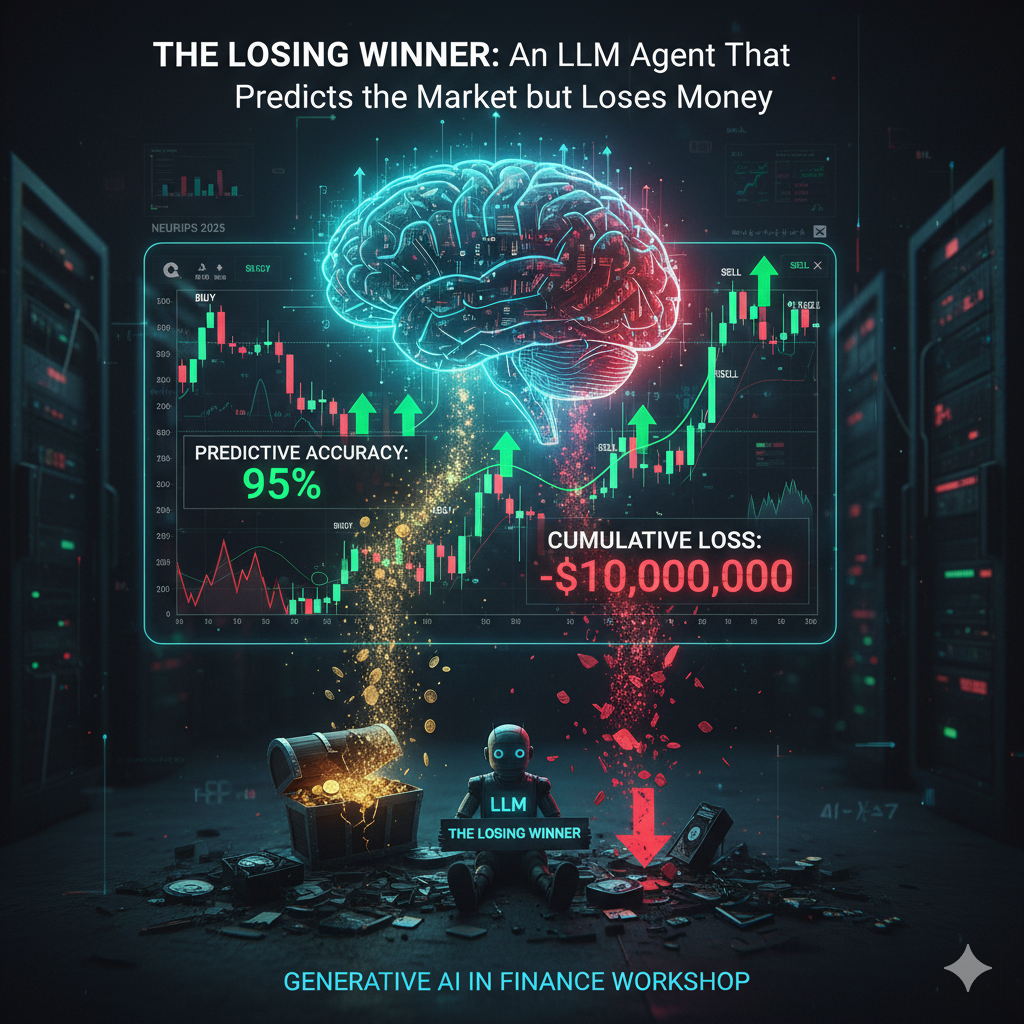

Fine-tuning an LLM for Bitcoin market state prediction improves accuracy but paradoxically worsens trading returns, exposing the dangers of proxy objectives and reward hacking in financial AI.
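As a rough illustration, here is one simple way accuracy and returns can diverge. It is not the paper's setup: the numbers are hypothetical toy data, and the strategy is assumed to take a unit long/short position with no fees or compounding. Directional accuracy counts every correct call equally, while returns weight each call by the size of the move. A model can therefore score more correct calls on small moves, miss the one large move, and still lose money.

```python
# Hypothetical toy data (NOT from the paper): daily returns and two models'
# directional calls. +1 = predict "up" (go long), -1 = predict "down" (go short).
returns = [0.01, 0.01, 0.01, -0.10, 0.02]
model_a = [1, 1, 1, 1, 1]      # correct on 4 of 5 days, but misses the crash
model_b = [-1, -1, 1, -1, 1]   # correct on 3 of 5 days, but catches the crash


def accuracy(calls, rets):
    """Fraction of days where the predicted direction matches the realized sign."""
    hits = sum(1 for c, r in zip(calls, rets) if (c > 0) == (r > 0))
    return hits / len(rets)


def strategy_return(calls, rets):
    """Sum of daily P&L for a unit long/short position (assumed: no costs, no compounding)."""
    return sum(c * r for c, r in zip(calls, rets))


for name, calls in [("A", model_a), ("B", model_b)]:
    print(f"{name}: acc={accuracy(calls, returns):.2f}, "
          f"return={strategy_return(calls, returns):+.2f}")
# A: acc=0.80, return=-0.05
# B: acc=0.60, return=+0.11
```

The more accurate model A ends with a loss, and the less accurate model B ends with a gain. This is the general reason an accuracy-style proxy objective can reward the wrong behavior when it is optimized in place of the actual trading objective.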

I am an incoming ECE PhD student at Purdue, starting fall 2026, advised by Ziran Wang. I am currently a research intern at KIST in Seoul, working on Embodied AI, with a focus on Vision-Language-Action models, under the mentorship of Hwasup Lim and Tackgeun You.

I received my M.S. from SNU, advised by Byoung-Tak Zhang. During my master's program, I also interned at SIMTech, A*STAR, where I was mentored by Haiyue Zhu. I received my B.S. from Yonsei University.

I'm interested in Embodied AI, Multimodal AI, and Data-Centric AI. Most of my research is about developing embodied AI from internet AI. Highlighted papers are those to which I made the main contributions.


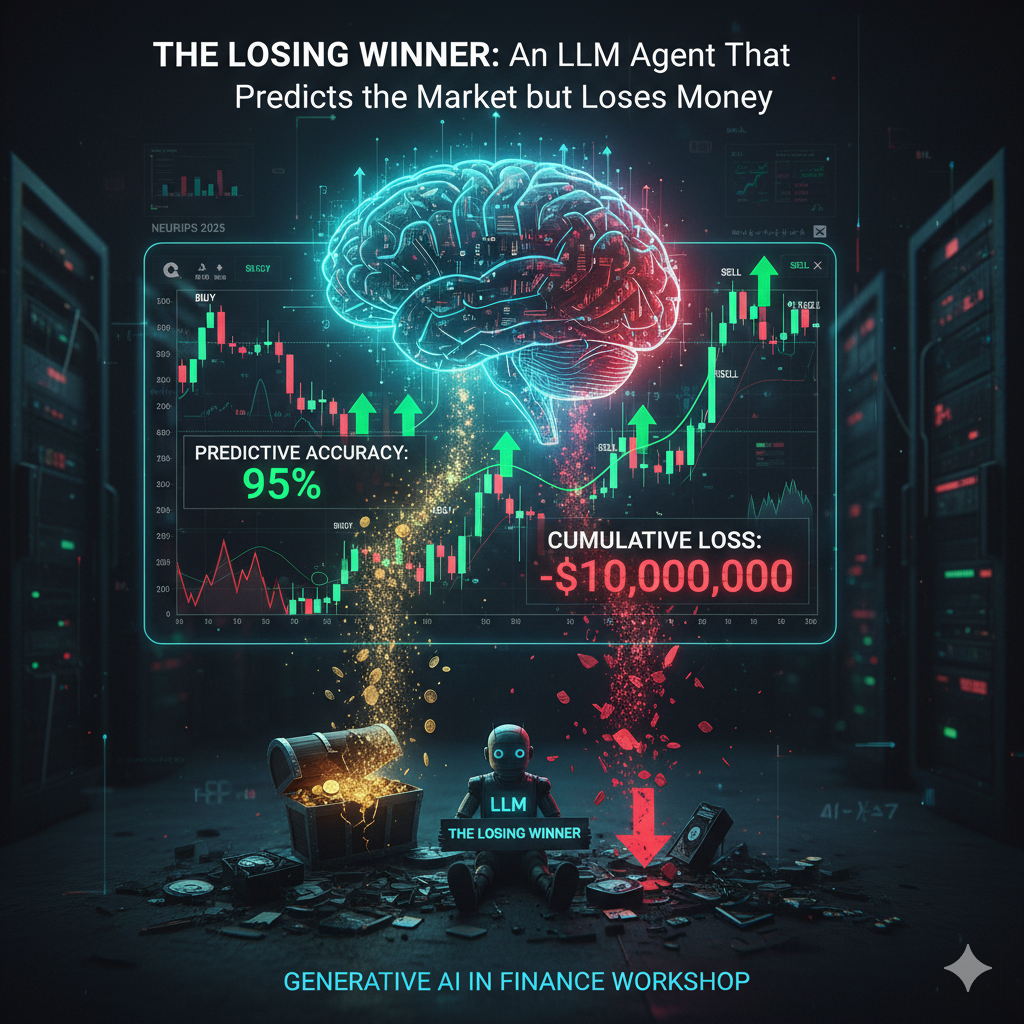

We propose the Continual Vision-and-Language Navigation (CVLN) paradigm, along with two methods for CVLN: Perplexity Replay (PerpR) and Episodic Self-Replay (ESR).

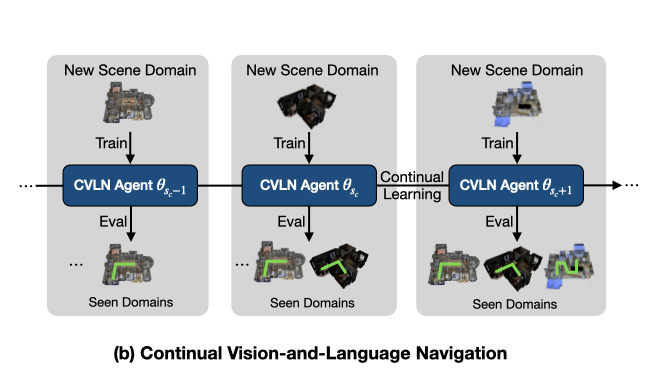

Ordinal bias leads action recognition models to over-rely on dominant action pairs. This inflates their measured performance even though they lack true video comprehension, a gap that persists when the models are challenged with action masking and sequence shuffling.

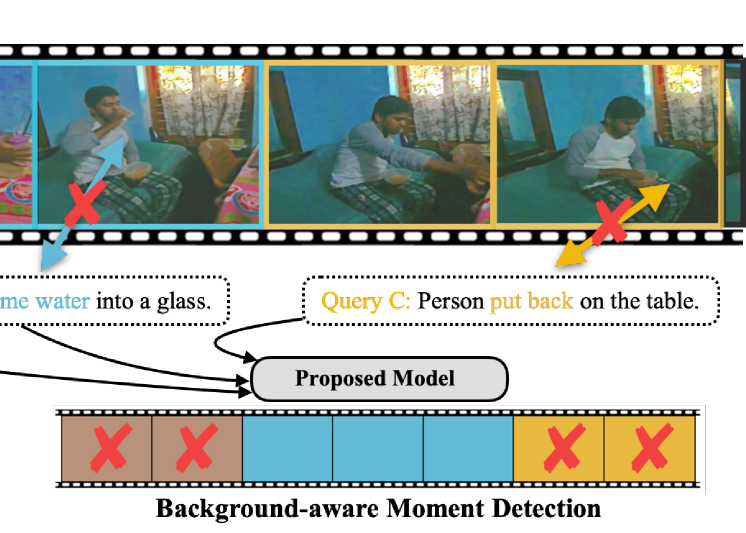

We propose Background-aware Moment Detection TRansformer (BM-DETR), which carefully adopts a contrastive approach for robust prediction. BM-DETR achieves state-of-the-art performance on various benchmarks while being highly efficient.

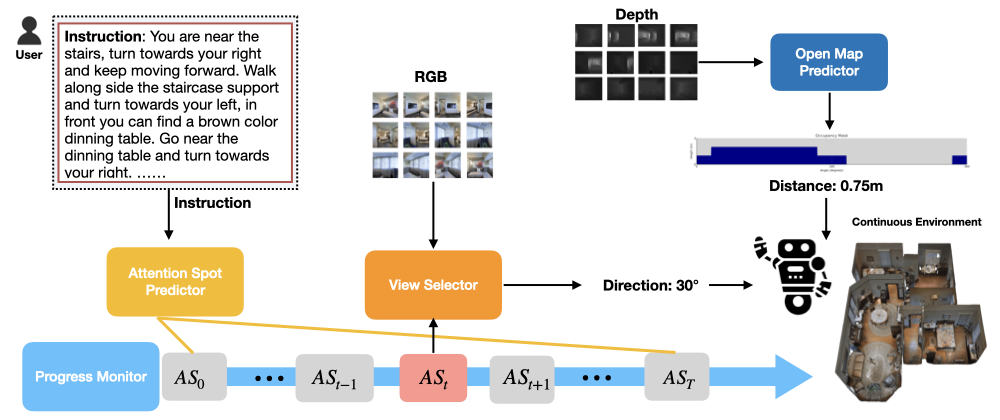

We propose zero-shot Vision-and-Language Navigation with Collision Mitigation (VLN-CM), which outputs low-level actions while accounting for possible collisions.

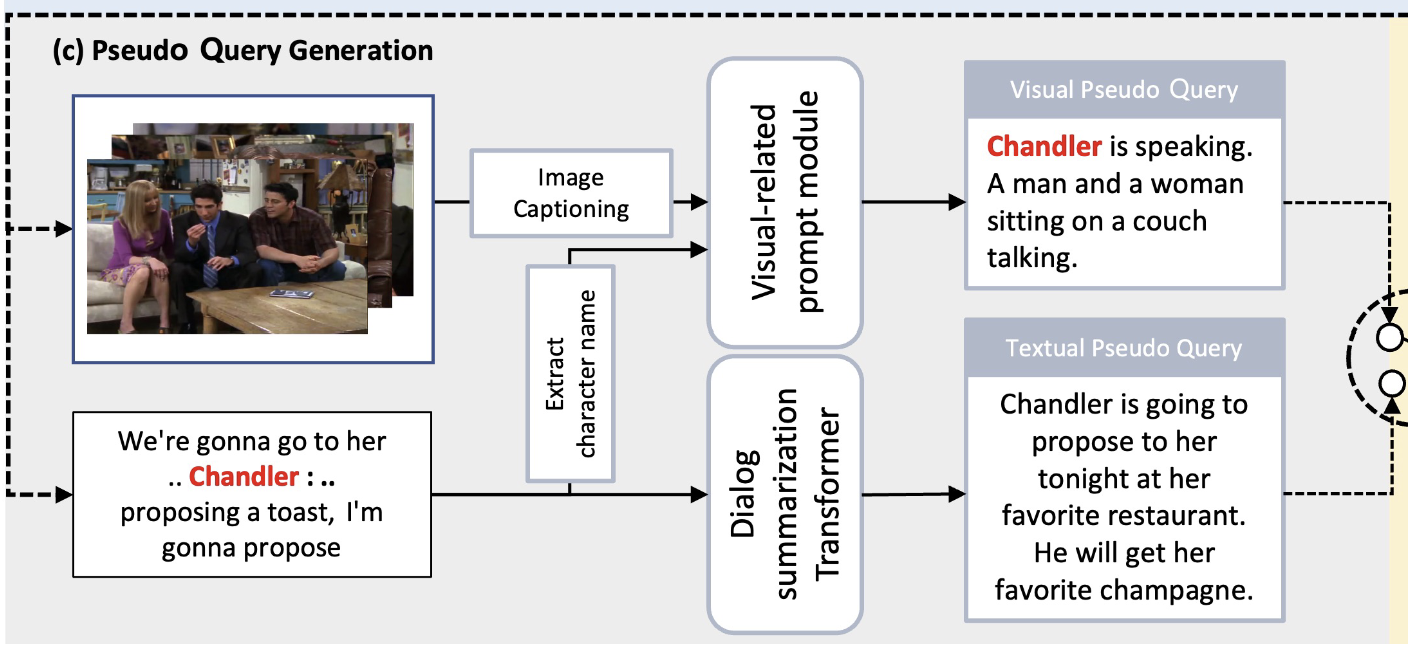

We propose a self-supervised learning framework: Modal-specific Pseudo Query Generation Network (MPGN). First, MPGN selects candidate temporal moments via subtitle-based moment sampling. Then, it generates pseudo queries exploiting both visual and textual information from the selected temporal moments.